By Jason Bedrick & Corey DeAngelis

This week’s erroneous attack on Arizona’s popular Empowerment Scholarship Accounts (ESAs) is another example of how biased reporting is misleading lawmakers and the public.

When the Arizona Auditor General last week released its Single Audit Report on the state for fiscal year 2024, Craig Harris of Channel 12 News had another fairy tale ready for viewers and readers. The ESA program, he claimed, is “plagued by weak controls, questionable spending, and internal management failures.”

No mention was made of the Arizona Department of Education’s recent finding that only 2% of ESA funds were spent on unallowed items (mostly innocent errors like backpacks and lunch boxes), and only 0.3% of ESA funds were spent fraudulently.

Harris’s central numerical claim — repeated on social media and amplified by Democratic lawmakers and education-establishment activists within hours — was that the auditors had found a “34% misspending” rate in a “random” sample of ESA purchases.

Both halves of that claim are false. And the falsehoods are not minor.

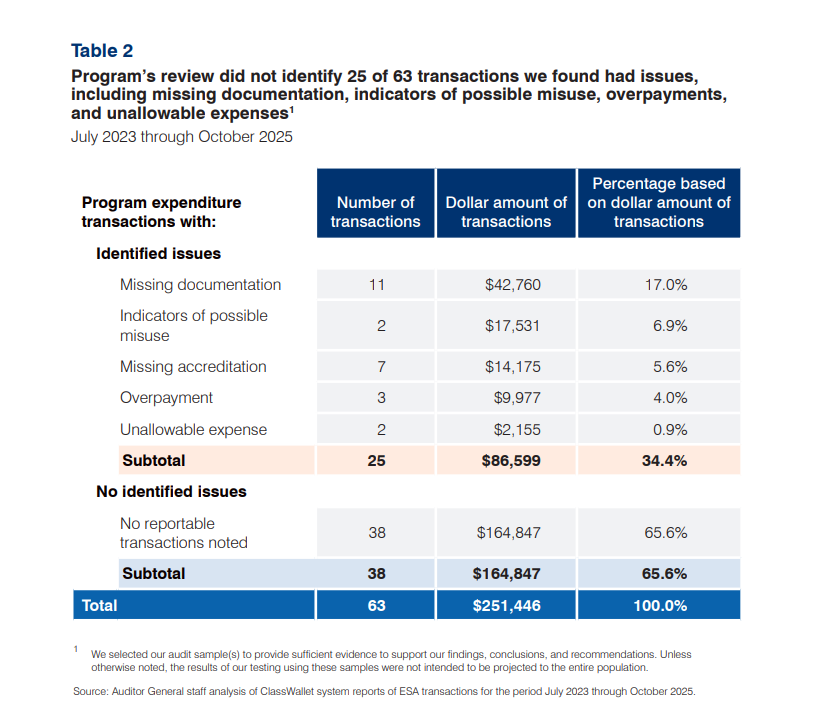

Start with “random.” The Auditor General’s report describes the relevant sample in unambiguous language: “we *judgmentally selected* 63 expenditure transactions for review occurring between July 2023 and October 2025 totaling $251,446.” [Emphasis added.]

A footnote on the same page adds, for the benefit of any reader who might be tempted to make the mistake that Harris did: “We selected our audit sample(s) to provide sufficient evidence to support our findings, conclusions, and recommendations. Unless otherwise noted, the results of our testing using these samples were *not intended to be projected to the entire population*.” [Emphasis added.]

Judgmental sampling and random sampling are not synonyms. They are distinct methodologies with distinct inferential properties. A random sample can be projected to a population; that is its entire purpose. A judgmental sample cannot, which is why auditors use it to probe suspected weaknesses rather than measure their prevalence.

In this case, the auditor general was testing the robustness of the Arizona Department of Education’s review process, not trying to determine the prevalence of misspending in the ESA program.

More responsible journalists, such as Garrett Archer of ABC 15, made sure to clarify that the auditor general’s findings were not generalizable to the entire program.

In other words, Harris completely misrepresented the auditor general’s methods and findings. That is sloppy at best, dishonest at worst.

Not only is the “34% misspending” figure not generalizable, but most of what it counts is not misspending at all.

The 34.4% figure comes from dividing $86,599 in flagged transactions by the $251,446 sample. But Table 2 of Finding 2024-04 breaks those flagged transactions into five categories, and only two of them — “unallowable expense” ($2,155) and “overpayment” ($9,977) — involve money the program should not have disbursed.

The other three are paperwork and compliance gaps: missing documentation ($42,760), missing accreditation ($14,175), and “indicators of possible misuse” ($17,531). A tutor’s accreditation certificate that wasn’t uploaded is not the same thing as a misspent dollar. The actual confirmed misspending within the sample ($12,132, made up of unallowable expenses at 0.9% of sample dollars and overpayments at 4.0%) comes to about 4.8%, not 34%. Moreover, as the report itself cautions, even that figure cannot be projected to the entire program.
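Readers who want to check these percentages can do so from the dollar figures quoted from Table 2. The short Python sketch below is our own illustration, not anything published by the Auditor General; the category labels are shorthand for the table’s rows, and treating only “unallowable expense” and “overpayment” as confirmed misspending reflects this article’s reading of the table.

```python
# Dollar figures as quoted above from Table 2 of Finding 2024-04
# (a judgmental sample of 63 transactions; not projectable to the program).
SAMPLE_TOTAL = 251_446
FLAGGED_TOTAL = 86_599  # total flagged dollars, as reported

flagged_by_category = {
    "unallowable expense": 2_155,
    "overpayment": 9_977,
    "missing documentation": 42_760,
    "missing accreditation": 14_175,
    "indicators of possible misuse": 17_531,
}

# Our grouping (an assumption of this illustration): only these two
# categories involve money the program should not have disbursed.
CONFIRMED = ("unallowable expense", "overpayment")


def share(amount: int, total: int = SAMPLE_TOTAL) -> float:
    """Return `amount` as a percentage of `total` (default: the sample total)."""
    return 100 * amount / total


flagged_pct = share(FLAGGED_TOTAL)
confirmed_pct = share(sum(flagged_by_category[c] for c in CONFIRMED))

print(f"All flagged items:     {flagged_pct:.1f}% of sample dollars")
print(f"Confirmed misspending: {confirmed_pct:.1f}% of sample dollars")
for name in CONFIRMED:
    print(f"  {name}: {share(flagged_by_category[name]):.1f}%")
```

Run as written, it prints a flagged share of 34.4% and a confirmed share of 4.8%. Adding the two rounded shares (0.9% + 4.0%) gives 4.9%, but the unrounded total is about 4.82%. The five categories as quoted also sum to $86,598, one dollar short of the reported $86,599 total, likely a rounding difference in the table; either total yields 34.4%.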

In short, Harris conflates paperwork issues with misspending and treats a non-generalizable sample as generalizable, even though the auditor general warned readers not to do exactly that. Then Harris’s manufactured anti-ESA talking points are repeated ad nauseam by politicians and political activists.

And that appears to be the point. Harris’s clumsy crusade against school choice, after all, is not new.

Harris built an entire investigative series on a Department of Education internal review that supposedly reported a 20% misuse rate — except the internal review, like the auditors’ sample, was not designed to be projected. Harris projected it anyway.

When the same Department then produced a separate analysis suggesting misuse was minimal, Harris turned around and faulted that study for over-generalizing from its sample. For Harris, non-generalizable findings become generalizable when they damage ESA. Generalizable findings become non-generalizable when they don’t.

The convenient feature of this method is that the error always points the same direction. A reporter who genuinely struggled with the statistics of audit sampling would make mistakes in both directions over time. Harris’s don’t. They cluster.

And they remain uncorrected. Harris’s original 20% claim has never been retracted. The “random sample” language and the 34% framing are now circulating through campaign statements, legislative press releases, and social media posts, citing Harris’s distorted reading of the Auditor General report.

One cannot help but notice that Harris’s manufactured anti-ESA talking points come at a moment when anti-ESA groups are gathering signatures for two ballot initiatives to curb and regulate the ESA program. One also cannot help but wonder whether the downstream political effect is more than incidental to the reporting.

The Auditor General’s findings on ESA are real and worth engaging on their own terms. The program’s risk-based audit methodology is likely better than that of any other program in the state, but it could still be improved. The auditor has some substantive criticisms, and ADE will have to answer them.

Arizonans deserve honest reporting on those findings, not statistical fictions dressed up as “journalism.”

Jason Bedrick is a Senior Research Fellow and Corey DeAngelis is a Research Fellow at The Heritage Foundation’s Center for Education Policy.